Harness Engineering is using a durable set of components to apply an LLM to an AI-ready Workspace.

Harness Engineering is the practice of managing the inputs, outputs and sensors surrounding a large language model using control theory to reach a desired outcome. Industry writing agrees that an Agent is the Model plus the Harness (Agent = Model + Harness). Birgitta Böckeler of Thoughtworks defines a Harness as "everything but the model."

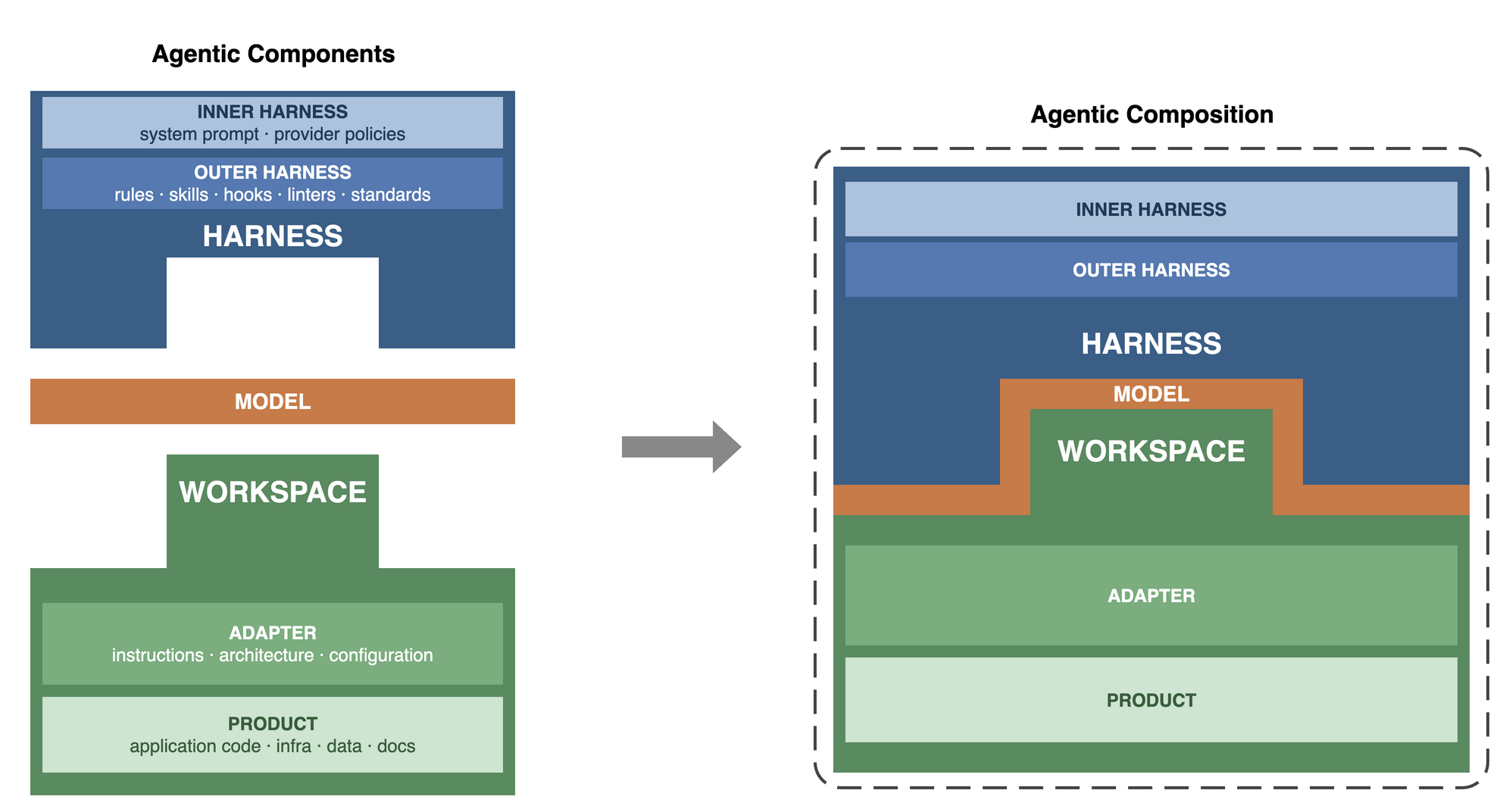

The paper expands the Agent = Model + Harness definition by describing an Agentic Composition that has three parts. The Model is the LLM in use. The Harness is instructions to the model. The Workspace is a project containing the assets being built.

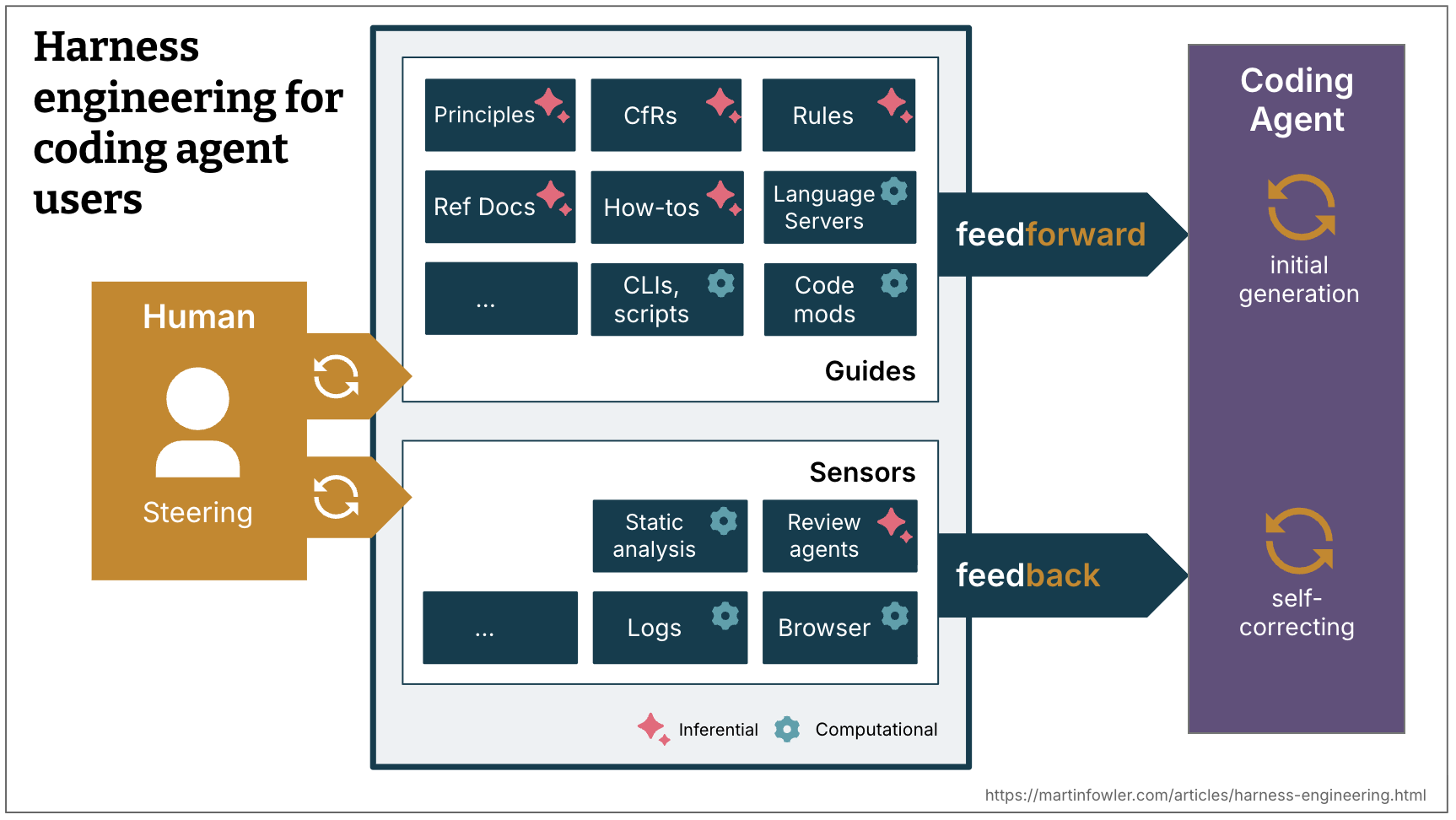

Agentic Compositions enable the use of control theory. Feedforward controls steer the Model before it acts. Feedback sensors measure the output and drive corrective action. Controls and sensors can be deterministic and computational, implemented in code like tests and linters, or stochastic and inferential, like AI-assisted reviews.
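The two axes in this paragraph (when a control acts, and whether it is computational or inferential) can be sketched as a small taxonomy. This is only an illustration: the class names `Timing`, `Kind`, and `Control` are hypothetical, and classifying a rules file as an inferential feedforward control is an assumption, not something the paper states.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Timing(Enum):
    FEEDFORWARD = "feedforward"  # steers the Model before it acts
    FEEDBACK = "feedback"        # measures the output after the Model acts


class Kind(Enum):
    COMPUTATIONAL = "computational"  # deterministic, implemented in code
    INFERENTIAL = "inferential"      # stochastic, judged by a model


@dataclass(frozen=True)
class Control:
    name: str
    timing: Timing
    kind: Kind


CONTROLS = [
    # Assumption: a prose rules file is interpreted by the Model, so it is inferential.
    Control("rules file", Timing.FEEDFORWARD, Kind.INFERENTIAL),
    Control("unit tests", Timing.FEEDBACK, Kind.COMPUTATIONAL),
    Control("linter", Timing.FEEDBACK, Kind.COMPUTATIONAL),
    Control("AI-assisted review", Timing.FEEDBACK, Kind.INFERENTIAL),
]


def group_by_quadrant(controls):
    """Group control names by their (timing, kind) pair."""
    quadrants = defaultdict(list)
    for control in controls:
        quadrants[(control.timing, control.kind)].append(control.name)
    return dict(quadrants)


quadrants = group_by_quadrant(CONTROLS)
for (timing, kind), names in quadrants.items():
    print(f"{timing.value:<12}{kind.value:<14}{', '.join(names)}")
```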

Agentic Compositions are infrastructure. It is easy to conflate them with workflows, agentic teams, or business intent. Engineers WILL consider those elements in designing a system. They are not in scope of the Agentic Composition definition.

The Harness concept is model-neutral in theory but may have provider-specific idioms in practice. For example, all model providers support some form of rules files. Claude Code uses CLAUDE.md and Codex uses AGENTS.md.

Agentic Compositions are

- NOT Intent - Harnesses are infrastructure in concept. They are an idempotent resource composed from files. What the Harness does, its intent, is a different topic.

- NOT Workflows - Harness engineering does not describe the flow of work through a model or a group of models. Workflows and event-driven architectures are a separate topic. Harness design and integration between Agentic Compositions will be a reflection of the overall system architecture.

- NOT Multiple agents - A Harness drives one instance of a model at a time. Agentic Compositions can be used together in multi-agent workflows and topologies as subcomponents of the larger system. One could make the case for a "meta-harness" that uses control theory on the whole system, but that is not the language being used in the industry as of early 2026.

- NOT Subagents - Agentic Compositions are a layer above subagents. Subagents can be an element within the Harness or Adapter.

What is the difference between Prompt, Context, and Harness engineering?

- Prompt engineering
  - Writing a well-structured input prompt to get a desired response in a single turn.
- Context engineering
  - Keeping the model focused and undistracted by managing the content and size of the model's working memory (context window) across multiple turns.
- Harness engineering
  - Arranging the knowledge, instructions, and tools available to a model to control its behavior.

Agentic Composition = Harness + Model + Workspace

An Agentic Composition has three parts. The Model is the LLM in use. The Harness carries the knowledge, instructions, and tools that tell the Model how to work. The Workspace is a project folder containing the assets being built. The Harness subdivides into an Inner Harness and an Outer Harness. The Inner Harness is what the provider ships like system prompts and built-in tools. The Outer Harness is what the engineer builds like rules, skills, and MCPs. The Workspace subdivides into an Adapter and a Product. The Adapter holds the files that make the Workspace AI-ready. The Product is the work asset being created or maintained. Different Harnesses applied to the same Workspace focus the model on different aspects of the Product. Harnesses and Workspaces can be independently developed and iterated.

- Harness - The knowledge, instructions, and tools used to guide the Model
  - Inner Harness - The parts of the Harness that are static to the provider like system prompts, built-in tools and policies
  - Outer Harness - The part of the Harness the engineer designs like knowledge, instructions, and tools
- Model - The LLM in use (Opus, Gemini, Qwen, etc.)
- Workspace - The project folder containing AI-ready files and the Product
  - Adapter - Information provided to the Model about the Workspace and the Product
  - Product - The work asset being created or maintained, the code

The Harness says "This is how to do work." The Workspace says "This is how to work with this asset."
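The three-part definition, with its Inner/Outer and Adapter/Product subdivisions, maps naturally onto a nested data structure. The sketch below is one way to picture it. The class and field names are hypothetical, and the item lists are abbreviated from the examples in this paper rather than a complete inventory.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Harness:
    inner: tuple[str, ...]  # shipped by the provider
    outer: tuple[str, ...]  # built by the engineer


@dataclass(frozen=True)
class Workspace:
    adapter: tuple[str, ...]  # files that make the Workspace AI-ready
    product: tuple[str, ...]  # the work asset being created or maintained


@dataclass(frozen=True)
class AgenticComposition:
    model: str
    harness: Harness
    workspace: Workspace

    def parts(self) -> dict[str, tuple[str, ...]]:
        """Flatten the composition into its five named parts."""
        return {
            "Model": (self.model,),
            "Inner Harness": self.harness.inner,
            "Outer Harness": self.harness.outer,
            "Adapter": self.workspace.adapter,
            "Product": self.workspace.product,
        }


composition = AgenticComposition(
    model="Opus",
    harness=Harness(
        inner=("system prompt", "built-in tools"),
        outer=("rules", "skills", "MCPs"),
    ),
    workspace=Workspace(
        adapter=("CLAUDE.md", ".llmdocs"),
        product=("src", "infra", "tests"),
    ),
)

for name, items in composition.parts().items():
    print(f"{name}: {', '.join(items)}")
```

Because the dataclasses are frozen, a Harness or Workspace value can be reused in several compositions without one composition mutating another.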

It is helpful to look at a concrete example. The Harness example uses the author's daily-driver setup. The Workspace example uses an application written in Go and hosted on Kubernetes. The author uses Claude Code in two primary work modes (pairing and agentic jobs). This is an abbreviated example. The harnesses and adapters described here are hundreds of files, tools, and lines of instructions.

- Harness
  - Inner Harness
    - Claude Code
    - Opus system prompt
    - Anthropic's provider-side policies
    - Built-in Claude Code tools like Bash() and Grep()
  - Outer Harness
    - CLAUDE.md
    - Go language rules
    - Context7 and Google MCPs
    - Serena LSP MCP and its configuration files
    - go, kubectl and markdownlint binaries and their configuration files
    - /docs and /review-deep skills
    - files with secrets like API keys
- Workspace (git repo)
  - Adapter
    - CLAUDE.md
    - .llmdocs with files like architecture.md, api.md, and data-model.md
    - .claude/skills and .claude/rules
    - .serena folder
    - mise.toml (variable, tool, secret, and build definitions)
  - Product
    - README.md (for humans)
    - src/api, src/ui, src/cli code folders
    - infra folder
    - tests folder with integration, smoke, and e2e tests

The following abbreviated directory trees show the layout on the filesystem. The two trees share similar files like CLAUDE.md but with different semantics. The Harness CLAUDE.md says to use the London school of TDD and red-green-refactor. The platform's Adapter CLAUDE.md says how to run the Go test suites and when to run which suite.

Harness, the author's dotfiles for daily-driver work

    /home/vscode
    ├── .claude
    │   ├── agents
    │   │   ├── review-quality.md
    │   │   ├── review-security.md
    │   │   └── review-testing.md
    │   ├── CLAUDE.md
    │   ├── hooks
    │   │   └── stop-phrase-guard.sh
    │   ├── rules
    │   │   ├── bash.md
    │   │   ├── go.md
    │   │   ├── lsp-serena.md
    │   │   ├── md-syntax.md
    │   │   └── python.md
    │   └── skills
    │       ├── docs.md
    │       ├── review-deep.md
    │       └── review-quick.md
    ├── .prettierrc
    └── .secrets
        ├── ai.env
        ├── context7.env
        ├── google.env
        └── sonarqube.env

Workspace, the platform git repo

    platform
    ├── .claude
    │   ├── commands
    │   ├── settings.local.json
    │   └── skills
    ├── .llmdocs
    │   ├── api.md
    │   ├── architecture.md
    │   ├── data-model.md
    │   └── deployment.md
    ├── .llmtmp
    │   ├── notes.md
    │   ├── plans
    │   └── specs
    ├── .mise.toml
    ├── .serena
    ├── CLAUDE.md
    ├── infra
    │   ├── backend.tf
    │   └── main.tf
    ├── README.md
    ├── secrets
    │   └── secrets.enc.yaml
    ├── src
    │   ├── app-api
    │   ├── app-cli
    │   └── app-ui
    └── tests
        ├── e2e
        ├── fixtures
        └── integration

The bounded contexts between Harness and Workspace are not as clean as they might look in the diagrams and examples. Take traditional tests (unit, e2e, etc.) as an example. Tests in the pipeline are a human construct to assure non-functional requirements (quality, security, performance). From the Model's perspective, tests are both a feedback sensor and part of the Product. Another example is the system prompt. A practitioner may override the Anthropic system prompt, stripping fluff like marketing ("You are the Claude Platform") and indemnifications ("If a user shows signs of an eating disorder[...]"). When left alone, the system prompt is part of the Inner Harness. When customized, it is part of the Outer Harness. There is some fluidity in the definitions and categories.

Harness Workspace Composability

A Harness and a Workspace combine to form an Agentic Composition. New Agentic Compositions can be formed by combining different Harnesses with different Workspaces. A Harness can be swapped across Workspaces. A Workspace can be operated by different Harnesses.

For this example, it is easiest to think of the Harness as a persona, something with a particular talent or focus area like coding, security, or documentation. This is subtly different from subagents. Subagents steer the Model to favor domain-specific words by shifting probabilities based on word relationships. A "security specialist" agent will have higher probabilities toward things like "OWASP" or "SQL Injection." A "software architect" agent will favor "Domain Driven Design" or "SOLID." Swapping a Harness does that too and then some. It can also swap system prompts, tools, configurations, identities, or skills. For example, a security Harness would have the security persona, threat modeling skills, and tools like SAST/DAST. A compliance Harness would have an auditor persona, skills for HIPAA, access to audit evidence, and the ability to write to a compliance register.
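Composability can be pictured as a cross product: any Harness can be paired with any Workspace. The following is a minimal sketch under assumed names. The `billing` workspace and the dictionary layout are hypothetical, while the security and compliance contents are taken from the paragraph above.

```python
from itertools import product

# Each Harness bundles a persona with skills and tools (abbreviated).
HARNESSES = {
    "security": {
        "persona": "security specialist",
        "skills": ["threat modeling"],
        "tools": ["SAST", "DAST"],
    },
    "compliance": {
        "persona": "auditor",
        "skills": ["HIPAA"],
        "tools": ["compliance register"],
    },
}

# "platform" is the example repo used in this paper; "billing" is hypothetical.
WORKSPACES = ["platform", "billing"]


def compose(harnesses, workspaces):
    """Pair every Harness with every Workspace."""
    return [
        {"harness": name, "workspace": workspace, **harnesses[name]}
        for name, workspace in product(harnesses, workspaces)
    ]


compositions = compose(HARNESSES, WORKSPACES)
for c in compositions:
    print(f"{c['harness']} harness on {c['workspace']}: tools={c['tools']}")
```

Adding a third Harness yields two more compositions without touching either Workspace, which is the practical payoff of keeping the two independent.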

Outcomes, Control Theory

Control theory is a field of engineering concerned with developing systems that increase the stability and optimality of processes. Applied to LLMs, it means designing the Harness and Workspace to drive the Model toward a desired outcome (the set point). There are two types of control: open-loop control (feedforward) and closed-loop control (feedback). A Harness can use both simultaneously. Feedforward controls steer the Model before it acts. Feedback sensors measure the output, produce an error signal (the gap between desired and actual outcome), and drive corrective action. The system terminates when the error signal is acceptable or when human intervention is required.

In more pragmatic terms, the Harness and Adapter tell the Model, "This is what you need to do your job" in the feedforward stage. Then the Harness and Adapter tell the operator or the Model, "This is how it turned out" in the feedback stage. If the outcome does not match the set point, the controller can choose to correct in the closed loop or escalate to a human for intervention in the open loop.

Consider a simple example of telling a model to create a new function in a Product.

The feedforward control says

- how to write functions (language idioms, code standards)

- use London school TDD (mock first)

- use red-green-refactor approach to TDD (failing test first)

- where to write the function in this product (the architecture)

- how to write tests in this product

- how to run tests in this product

- what passing tests means

The feedback control says

- whether the new function and new tests meet coding standards

- whether the new function passes the new tests

- whether the tests adequately address the requirements

- what to do if there is an error; try to fix it or escalate to the human

Credit Birgitta Böckeler, Thoughtworks


Harnesses in Practice

The platform runs agent workloads in Kubernetes. A workload container is the platform's compute unit. It takes three git repos as input and chains them at startup through a three-layer bootstrap. This is an example of an implementation of Agentic Composition. The platform does not provide workflows. The platform only provides Agentic Composition, the infrastructure. Workflows could run on the platform using a workflow engine (LangChain, Dify) or autonomous agents (OpenClaw, OpenFang).

| Layer | Repo | Maps to |
|---|---|---|
| 1 | Bootstrap repo (public, encrypted) | Basic credentials like SSH keys, secret passphrases |
| 2 | Dotfiles repo (private) | Outer Harness: shell config, tool settings, MCP servers, agent skills, encrypted secrets |
| 3 | Workspace repo (private) | Workspace: Adapter and Product |

The agent tooling (Claude Code, OpenCode) and the provider (Anthropic, OpenAI) form the Inner Harness. The bootstrap and dotfiles repos together form the Outer Harness. Layer 1 delivers basic credentials. Layer 2 installs the dotfiles repo, which carries the persona's shell config, tool settings, agent rules, and secrets. Layer 3 clones the workspace repo into the container's working directory.

The platform calls this combination a persona. A platform persona carries infrastructure provisioning tools and cloud config. A data persona carries pipeline orchestration and data-quality rules. A security persona carries vulnerability scanners and threat modeling skills. Swapping the dotfiles repo URL in the container config swaps the Harness. Swapping the workspace repo URL retargets the agent at a different codebase. The Model runs inside the container.

Workload containers are ephemeral. Building a container from the same three inputs produces the same starting state.

The dotfiles repo and the workspace repo evolve independently. The dotfiles repo gains a new MCP server or a refined skill on its own schedule. The workspace repo gains new code, tests, or Adapter files on its own schedule. Neither repo knows about the other. The contract between them is shallow. That shallow contract is what makes them composable. A workload container brings them together at startup and gives them cohesion for the duration of the container's lifecycle.
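The three-input container can be sketched as an immutable spec plus a function that derives the bootstrap order from it. This is an assumption-laden illustration: `WorkloadSpec`, `bootstrap_plan`, and the `example.com` repo URLs are hypothetical and do not describe the platform's actual configuration format.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class WorkloadSpec:
    bootstrap_repo: str  # layer 1: basic credentials
    dotfiles_repo: str   # layer 2: Outer Harness (the persona)
    workspace_repo: str  # layer 3: Adapter and Product


def bootstrap_plan(spec):
    """Return the ordered layers a container would apply at startup."""
    return [
        (1, "bootstrap", spec.bootstrap_repo),
        (2, "dotfiles", spec.dotfiles_repo),
        (3, "workspace", spec.workspace_repo),
    ]


# Hypothetical repo URLs for illustration only.
platform_job = WorkloadSpec(
    bootstrap_repo="git@example.com:org/bootstrap.git",
    dotfiles_repo="git@example.com:org/dotfiles-platform.git",
    workspace_repo="git@example.com:org/platform.git",
)

# Swapping the dotfiles URL swaps the Harness; the Workspace is untouched.
security_job = replace(
    platform_job,
    dotfiles_repo="git@example.com:org/dotfiles-security.git",
)

for layer, name, url in bootstrap_plan(security_job):
    print(layer, name, url)
```

The plan is a pure function of the three inputs, which mirrors the claim that the same inputs produce the same starting state.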

References

- The full version of this paper covers additional topics including deterministic vs stochastic controls, workflow topologies, and event-driven coordination patterns.

- Böckeler, B. (2026, April 2). Harness engineering for coding agent users. martinfowler.com. Defines Agent = Model + Harness and introduces feedforward guides and feedback sensors as the two control types, each either computational or inferential.

- Young, J. (2025, November 26). Effective harnesses for long-running agents. Anthropic. Addresses agent state loss across context windows with an initializer/coding agent pattern.

- LangChain. (2026, March 11). The anatomy of an agent harness. langchain.com. Breaks down the harness into filesystems, sandboxes, bash/code tools, memory, and search.

- Rajasekaran, P. (2026, March 24). Harness design for long-running application development. Anthropic. Introduces a generator/evaluator architecture inspired by GANs.

- Lopopolo, R. (2026, February 11). Harness engineering: leveraging Codex in an agent-first world. OpenAI. Documents building a product with zero manually-written code.

- Huntley, G. (2025, July 14). Ralph Wiggum as a "software engineer". Describes the "Ralph loop": a bash while-loop that feeds a prompt into a coding agent continuously.

- GitHub does dotfiles. dotfiles.github.io. Community guide to managing configuration files through GitHub repositories.

- Athalye, A. Dotbot. GitHub. Lightweight dotfiles bootstrapper that symlinks, creates directories, and runs shell commands from a YAML manifest.

- Jones, A. (2026). Building an AI native engineering org. AI Summit Spring 2026, IT Revolution. (freewalled)

- Control theory. Wikipedia. Defines open-loop (feedforward) and closed-loop (feedback) control, set points, error signals, controllers, and sensors.