Harness Engineering is using a durable set of components to apply an LLM to an AI-ready Workspace.

Harness Engineering is the practice of managing the inputs, outputs and sensors surrounding a large language model using control theory to reach a desired outcome. The concepts are more than 150 years old: in 1868 James Clerk Maxwell published a mathematical analysis of the governors that control the speed of steam engines. The new steam engine is the LLM. Birgitta Böckeler of Thoughtworks defines a Harness as "everything but the model."

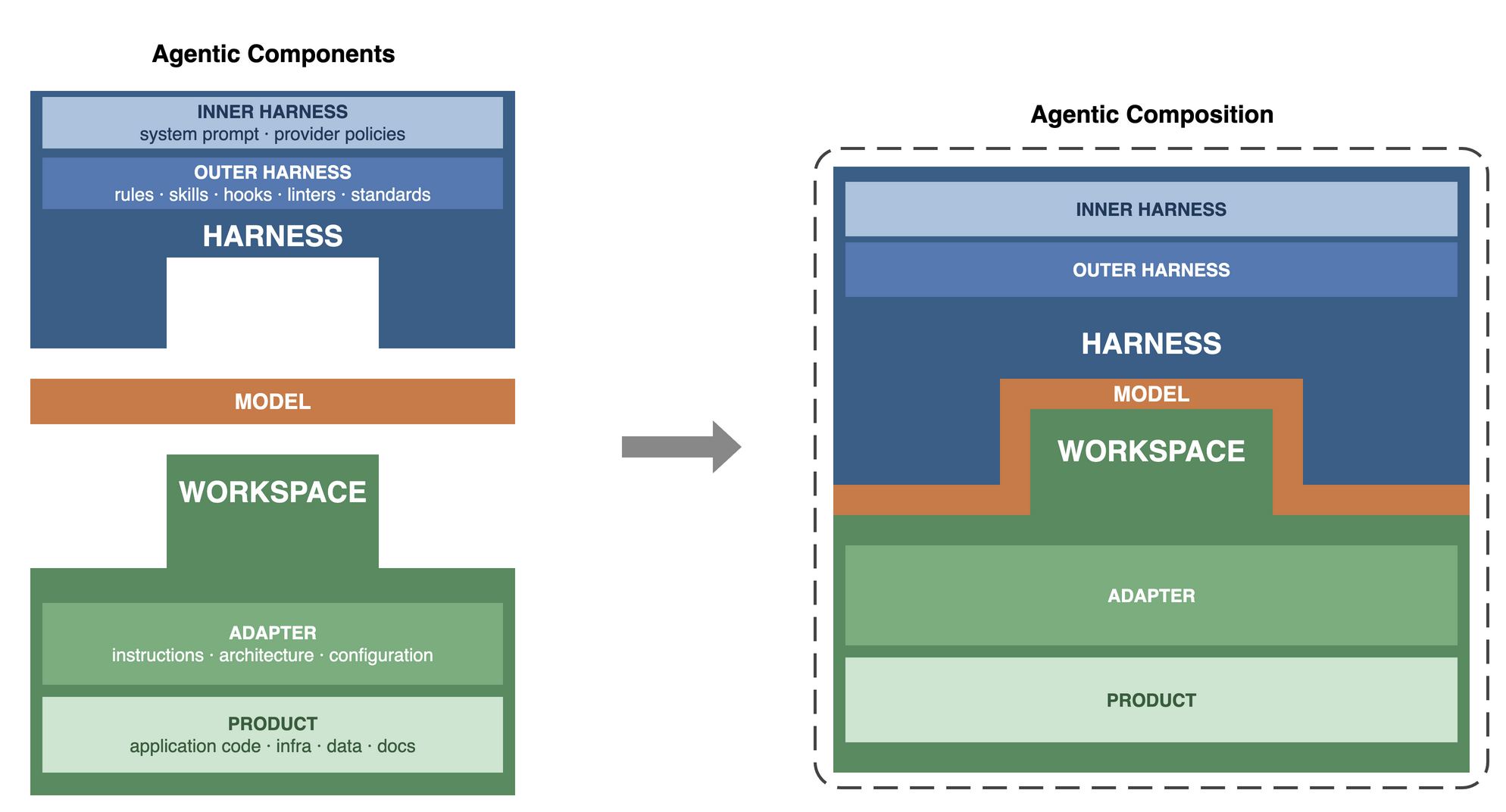

An Agentic Composition has three parts: Harness, Model, and Workspace. The Harness carries the persona, tools, identities, and rules that tell the Model how to work. The Workspace is a project folder. It contains an Adapter and a Product. The Adapter holds the files that make the project AI-ready. The Product is the code, data, or docs being built. The Harness is swappable. The Workspace is fixed. Different Harnesses applied to the same Workspace focus the agent on different aspects of the Product.

The Harness splits into an Inner Harness and an Outer Harness. The Inner Harness is what the provider ships. System prompts, built-in tools, policies. The Outer Harness is what the engineer builds. Rules, skills, hooks, MCPs, linters. Both layers use control theory. Feedforward controls steer the Model before it acts. Feedback sensors measure the output and drive corrective action. The controls can be deterministic like tests and linters or stochastic like AI code reviews. This framing separates Harness Engineering from prompt engineering and context engineering. Prompt engineering is single-turn phrasing. Context engineering is token curation. Harness Engineering is the full system of controllers and sensors across turns, sessions, and compositions.

This paper defines an Agentic Composition model and how Harnesses and Workspaces compose. It applies control theory to LLM workflows and distinguishes deterministic from stochastic controls. It uses a real Harness and an internal platform as a Workspace to ground the concepts. The paper skews heavily toward engineers and software projects, but the paradigm applies beyond that. A Product Manager could have a Harness and Workspace for a Product idea. A Project Manager could have a TPM Harness with Workspaces for each of their teams.

Agentic Composition = Harness + Model + Workspace

- Harness - The persona, behaviors, tools, and identities used to guide the Model
  - Inner Harness - The parts of the Harness that are static to the provider like system prompts, built-in tools and policies
  - Outer Harness - The part of the Harness the engineer designs like rules, tools and skills
- Model - The LLM in use (Opus, Gemini, Qwen, etc.)
- Workspace - The project folder containing AI-ready files and the Product
  - Adapter - Information provided to the Model about the Workspace and the Product
  - Product - The work asset being created or maintained, the code

The Harness says "This is our way of doing things." The Workspace says "This is how to work with this asset."
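The definitions above can be written down as a small data model. This is a minimal sketch, not the author's implementation: the class and field names are assumptions introduced here for illustration, and the example values are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Harness:
    """Persona, behaviors, tools, and identities that guide the Model."""
    persona: str
    inner: tuple[str, ...] = ()  # provider-shipped: system prompt, built-in tools, policies
    outer: tuple[str, ...] = ()  # engineer-built: rules, tools, skills


@dataclass(frozen=True)
class Workspace:
    """Project folder: Adapter (AI-ready files) plus Product (the work asset)."""
    adapter: tuple[str, ...]
    product: tuple[str, ...]


@dataclass(frozen=True)
class AgenticComposition:
    """Agentic Composition = Harness + Model + Workspace."""
    harness: Harness
    model: str
    workspace: Workspace


# Placeholder values, chosen only to make the sketch runnable.
coding = Harness(
    persona="coding",
    inner=("system prompt", "built-in tools", "policies"),
    outer=("rules", "tools", "skills"),
)
platform = Workspace(
    adapter=("CLAUDE.md", ".llmdocs/architecture.md"),
    product=("src/", "tests/"),
)
composition = AgenticComposition(harness=coding, model="Opus", workspace=platform)
print(composition.harness.persona, composition.model)
```

Freezing the dataclasses mirrors the idea that each part is a unit to be swapped whole rather than mutated in place.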

It is helpful to look at a concrete example. The following uses the author's daily-driver setup as the Harness and an internal platform for hosting agentic workloads as the Workspace. The example runs Claude Code in two primary work modes (pairing and agentic jobs). This is an abbreviated example. The harnesses and adapters described here are hundreds of files, tools, and lines of instructions.

- Harness
  - Inner Harness
    - Opus system prompt
    - Server-side policies
    - Built-in tools like Bash() and Grep()
  - Outer Harness
    - CLAUDE.md
    - Go language rules
    - Context7 and Google MCPs
    - Serena LSP and its configuration files
    - go, kubectl and markdownlint binaries and their configuration files
    - /docs and /review-deep skills
    - .env secrets files
- Workspace (git repo)
  - Adapter
    - CLAUDE.md
    - .llmdocs with files like architecture.md, api.md, and data-model.md
    - .claude/skills and .claude/rules
    - .serena folder
    - mise.toml (variable, tool, and build definitions)
  - Product
    - README.md (for humans)
    - src/api, src/ui, src/cli code folders
    - infra folder
    - tests folder with integration, smoke, and e2e tests

The following abbreviated directory trees show the layout on the filesystem. The two trees share similar files like CLAUDE.md but with different semantics. The Harness CLAUDE.md says to use the London school of TDD and red-green-refactor. The platform's Adapter CLAUDE.md says how to run the go test suites and when to run which suite.

Harness, the author's home folder

    /home/vscode
    ├── .claude
    │   ├── agents
    │   │   ├── review-quality.md
    │   │   ├── review-security.md
    │   │   └── review-testing.md
    │   ├── CLAUDE.md
    │   ├── hooks
    │   │   └── stop-phrase-guard.sh
    │   ├── rules
    │   │   ├── bash.md
    │   │   ├── go.md
    │   │   ├── lsp-serena.md
    │   │   ├── md-syntax.md
    │   │   └── python.md
    │   └── skills
    │       ├── review-deep.md
    │       └── review-quick.md
    ├── .prettierrc
    └── .secrets
        ├── ai.env
        ├── context7.env
        ├── google.env
        └── sonarqube.env

Workspace, the platform git repo

    platform
    ├── .claude
    │   ├── commands
    │   ├── settings.local.json
    │   └── skills
    ├── .llmdocs
    │   ├── api.md
    │   ├── architecture.md
    │   ├── data-model.md
    │   ├── deployment.md
    │   └── ops.md
    ├── .llmtmp
    │   ├── notes.md
    │   ├── plans
    │   └── specs
    ├── .serena
    ├── CLAUDE.md
    ├── infra
    │   ├── backend.tf
    │   ├── entra.tf
    │   └── main.tf
    ├── README.md
    ├── secrets
    │   └── secrets.enc.yaml
    ├── src
    │   ├── app-api
    │   ├── app-cli
    │   └── app-ui
    └── tests
        ├── e2e
        ├── fixtures
        └── integration

The bounded contexts in these categories are not as clean as they might look in the diagrams and examples. Take tests for example. Tests in the pipeline are a human construct to assure non-functional requirements (quality, security, performance). Tests from the Model's perspective are a feedback sensor AND a Product. Another example is the system prompt. A practitioner may override the Anthropic system prompt, stripping fluff like marketing ("You are the Claude Platform") and indemnifications ("If a user shows signs of an eating disorder[...]"). When left alone, the system prompt is an Inner Harness. When customized, it is an Outer Harness. There is some fluidity in the definitions and categories.

Harness Workspace Composability

A Harness and a Workspace, combined with a Model, form an Agentic Composition. New Agentic Compositions can be formed by combining different Harnesses with different Workspaces. A Harness can be swapped across Workspaces. A Workspace can be operated by different Harnesses.

For this example, it is easiest to think of the Harness as a persona, something with a particular talent or focus area like coding, security, or documentation. This is subtly different from agents. Agents condition the Model to favor domain-specific outputs by shifting the probability distribution over tokens. A "security specialist" agent will have higher probabilities toward things like "OWASP" or "SQL Injection." A "software architect" agent will favor "Domain Driven Design" or "SOLID." Swapping a Harness does that too, and it can also swap system prompts, tools, configurations, identities, or skills. For example, a security Harness would have the security persona, threat modeling skills, and tools like SAST/DAST. A compliance Harness would have an auditor persona, skills for HIPAA, access to audit evidence, and the ability to write to a compliance register.
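The swap described above can be sketched in a few lines. This is illustrative only: the `Composition` class, the Harness names, and the second Workspace name (`billing`) are assumptions made up for the example.

```python
from dataclasses import dataclass, replace
from itertools import product


@dataclass(frozen=True)
class Composition:
    harness: str    # hypothetical Harness name, e.g. a persona
    workspace: str  # hypothetical Workspace name, e.g. a git repo
    model: str = "Opus"


harnesses = ["coding", "security", "compliance"]
workspaces = ["platform", "billing"]

# Any Harness can be applied to any Workspace.
compositions = [Composition(h, w) for h, w in product(harnesses, workspaces)]

# Swapping the Harness leaves the Workspace and the Model untouched.
base = Composition("coding", "platform")
audit = replace(base, harness="security")

print(len(compositions))  # 3 Harnesses x 2 Workspaces = 6
print(audit)
```

The point of the sketch is that a Harness and a Workspace vary independently, so the number of possible compositions grows multiplicatively while the number of things to maintain grows additively.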

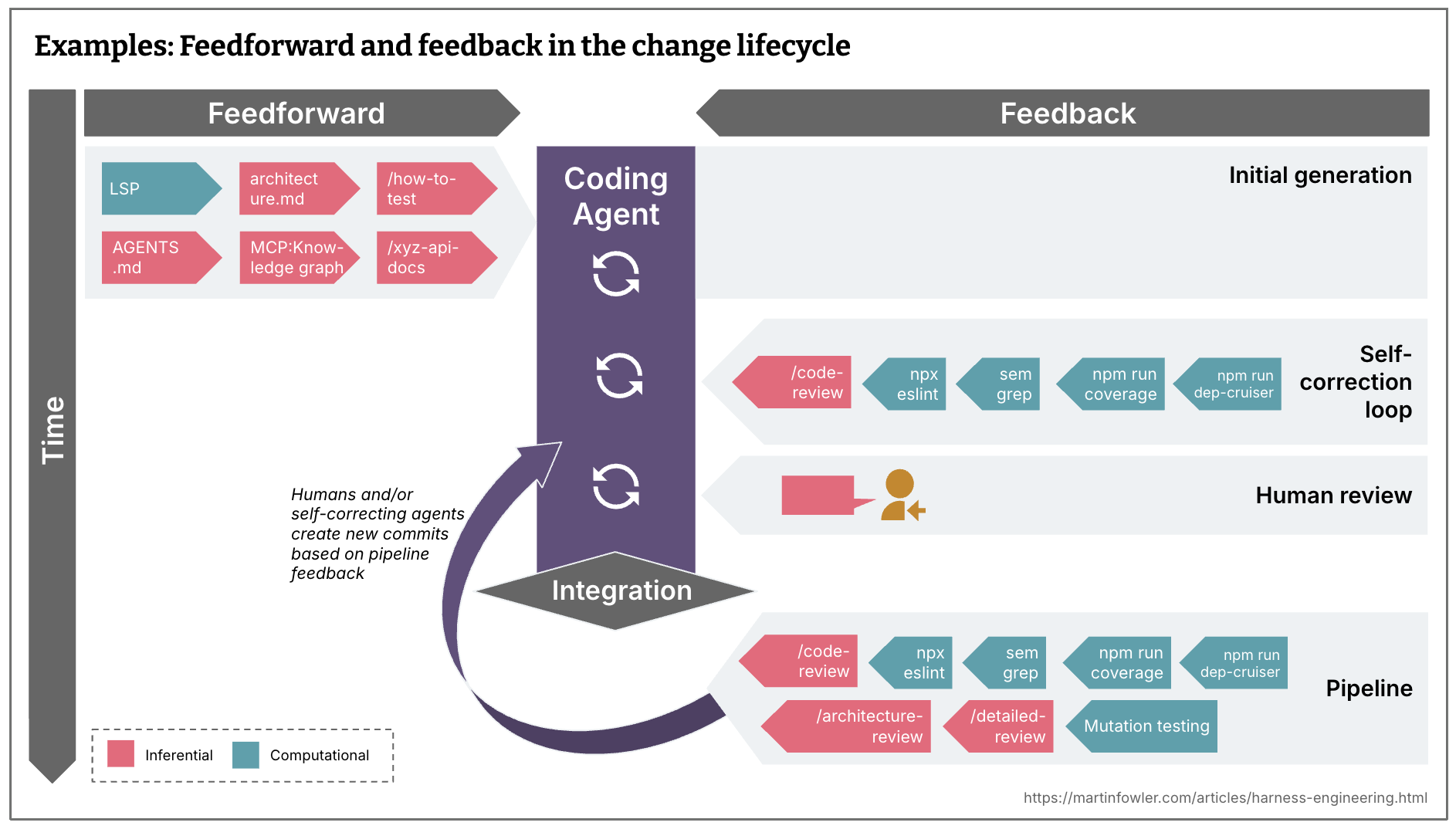

Outcomes, Control Theory

Control theory is a field of engineering concerned with developing systems that increase the stability and optimality of processes. Applied to LLMs, it means designing the Harness and Workspace to drive the Model toward a desired outcome (the set point). There are two types of control: open-loop control (feedforward) and closed-loop control (feedback). A Harness uses both simultaneously. Feedforward controls steer the Model before it acts. Feedback sensors measure the output, produce an error signal (the gap between desired and actual outcome), and drive corrective action in the next iteration. The system terminates when the error signal is acceptable or when human intervention is required.
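The loop can also be stated in symbols. The notation is introduced here for illustration and is not taken from the cited sources: $r$ is the set point, $y_k$ the output measured by the sensors at iteration $k$, $e_k$ the error signal, $\varepsilon$ the acceptable error, and $K$ the iteration budget.

```latex
\begin{aligned}
e_k &= r - y_k, \qquad k = 1, 2, \dots, K \\
\text{stop} &\iff |e_k| \le \varepsilon \\
\text{escalate to a human} &\iff k = K \ \text{and}\ |e_K| > \varepsilon
\end{aligned}
```

For a pass/fail sensor such as a test run, $e_k$ takes only two values, so $\varepsilon = 0$.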

Feedforward is the Harness telling the Model how to operate. Feedback is the Harness measuring whether the Model achieved the desired outcome. The error signal from the feedback loop drives the controller to adjust the next iteration. A code review calling for a refactor or a security review calling for a data handling change are examples of error signals feeding back into the process.

In more pragmatic terms, the Harness and Adapter tell the Model, "This is what you need to do the job" in the feedforward stage (rules, tools, skills). Then, in the feedback stage, the Harness and Adapter tell the operator or the Model "This is how it turned out" (sensors like tests, linters, and reviews). If the outcome does not match the set point, the controller can choose to correct in the closed loop or escalate to a human for intervention.

Consider a simple example of creating a new function, using TDD. The Harness tells the Model to use London school (mock first) and to use red-green-refactor (write a failing test, code until the test passes). The feedforward control says, "This is the function to create and how it should behave. Write a failing test. Then write code to make the test pass." The feedback sensor, receiving the new function and test, runs the test and produces an error signal: pass or fail. If the test passes, the error signal is zero and the set point is reached. If the test fails, the error signal drives another iteration. If the controller cannot resolve the error, the workflow escalates to a human for intervention.
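The TDD example above can be sketched as a small closed loop. Everything here is a stand-in: `fake_model` plays the role of the Model, `run_sensor` plays the role of the test run, and the iteration limit of 3 is an arbitrary assumption. A real Harness would call an agent and a test runner instead.

```python
from typing import Callable, Optional

Candidate = Callable[[int, int], int]

# Feedforward control: instructions given to the Model before it acts.
FEEDFORWARD = "Write a failing test first, then write code until the test passes."


def run_sensor(candidate: Candidate) -> int:
    """Feedback sensor: run the test and return the error signal (0 = pass, 1 = fail)."""
    try:
        passed = candidate(2, 3) == 5 and candidate(-1, 1) == 0
    except Exception:
        passed = False
    return 0 if passed else 1


def control_loop(
    propose: Callable[[str, int], Candidate],
    max_iterations: int = 3,
) -> Optional[Candidate]:
    """Iterate until the error signal is zero. Return None to escalate to a human."""
    for attempt in range(max_iterations):
        candidate = propose(FEEDFORWARD, attempt)  # the Model acts
        error = run_sensor(candidate)              # the sensor measures
        if error == 0:
            return candidate                       # set point reached
    return None                                    # controller could not resolve the error


def fake_model(instructions: str, attempt: int) -> Candidate:
    """Stand-in for the Model: wrong on the first attempt, right on the second."""
    if attempt == 0:
        return lambda a, b: a - b
    return lambda a, b: a + b


result = control_loop(fake_model)
print("set point reached" if result else "escalate to human")
```

The sensor here is deterministic and computational. Swapping `run_sensor` for an AI review would keep the loop shape but make the error signal stochastic.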

Credit Birgitta Böckeler, Thoughtworks


Deterministic vs Stochastic, Computational vs Inferential

A Harness and Adapter have deterministic and stochastic controls. They also have computational and inferential controls. These two axes are independent and produce four combinations.

Deterministic controls produce the same output for the same input: a test suite, a linter, or a code coverage measurement. Stochastic controls produce variable outputs: an AI code review or the evaluator agent in a GAN-inspired generator/evaluator setup.

Computational controls execute defined logic. Code that counts lines, parses a file, or checks a value against a threshold. Inferential controls use a Model to reason about the output. An LLM reviewing code for architectural fitness or a Model judging whether a commit message is meaningful.

Not all stochastic controls are inferential. A fuzz test that feeds randomly generated inputs to a function is stochastic and computational. Its findings vary from run to run, but no Model is involved. (A heuristic that estimates token count at 4 characters per token is only approximate, but by the definition above it is still deterministic: the same text always yields the same estimate.) An AI code review is stochastic and inferential. It guesses, and a Model does the guessing. A linter is deterministic and computational. It checks rules with code. A Model that verifies a regex matches a specification is the closest case to deterministic and inferential. The answer is binary, but the Model reasons to reach it.

All four combinations are tools to meet the non-functional requirements.
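One way to keep the two axes separate is to tag each control with both. The sketch below is an assumption about how such a registry could look, not part of any tool named in this paper. The enum and class names are invented here, and the fuzz test entry is an illustrative example supplied for the stochastic and computational quadrant.

```python
from collections import defaultdict
from enum import Enum
from typing import NamedTuple


class Repeatability(Enum):
    DETERMINISTIC = "deterministic"  # same input, same output
    STOCHASTIC = "stochastic"        # output may vary between runs


class Mechanism(Enum):
    COMPUTATIONAL = "computational"  # executes defined logic
    INFERENTIAL = "inferential"      # a Model reasons about the output


class Control(NamedTuple):
    name: str
    repeatability: Repeatability
    mechanism: Mechanism


CONTROLS = [
    Control("linter", Repeatability.DETERMINISTIC, Mechanism.COMPUTATIONAL),
    Control("test suite", Repeatability.DETERMINISTIC, Mechanism.COMPUTATIONAL),
    Control("Model checking a regex against a spec", Repeatability.DETERMINISTIC, Mechanism.INFERENTIAL),
    # Illustrative assumption: a fuzz test with unseeded random inputs.
    Control("randomized fuzz test", Repeatability.STOCHASTIC, Mechanism.COMPUTATIONAL),
    Control("AI code review", Repeatability.STOCHASTIC, Mechanism.INFERENTIAL),
]

# Group control names by their (repeatability, mechanism) quadrant.
by_quadrant = defaultdict(list)
for control in CONTROLS:
    by_quadrant[(control.repeatability, control.mechanism)].append(control.name)

for (repeatability, mechanism), names in by_quadrant.items():
    print(f"{repeatability.value} + {mechanism.value}: {', '.join(names)}")
```

A registry like this makes it easy to see which quadrants a Harness leans on and which are empty.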

Workflows: Brokers vs Mediators, Orchestration vs. Choreography

This paper describes the Harness and Workspace as atomic units that combine into Agentic Compositions, the composable unit. What has NOT been described is chaining Agentic Compositions together into workflows. This paper does not cover event-driven architecture in detail. It is an old problem in computing. LLMs have not fundamentally changed the tradeoffs. There is ample reading available on the subject. It is, however, worth discussing some common patterns for designing workflows that combine Agentic Compositions, because the engineer will face the same challenges that were faced with microprocessor design, software components, distributed computing, and microservices: hard problems like coupling, cohesion, ordering, consistency, and partitioning.

Workflow design is fundamentally about ordering and dependencies between components (Agentic Compositions in this case). When chaining components into workflows, these are some useful ideas to get started.

Topologies

- Broker Topology - A lightweight message broker passes events between components. Each component independently reacts to events and emits new ones. No single component knows the full workflow. Useful when coordination is minimal or emergent.

- Mediator Topology - A central mediator (manager) receives an event and then follows predefined steps to notify components of the event. Useful when the sequence of steps requires coordination.

Coordination

- Orchestration - The components in the system are managed by some kind of central agent. Like a conductor directing an orchestra. Components are not aware of each other.

- Choreography - The components in the system each respond independently to events. Like dancers on a stage who each know their own movements and respond to cues. Coordination emerges from the event flow rather than from a central authority.
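The difference between the two topologies can be sketched in a few dozen lines. This is a toy, in-process illustration under stated assumptions: the class names, event names, and payload are invented here, and pairing the broker with choreography and the mediator with orchestration is one common pairing, not the only one. A real system would use a message broker or a workflow engine.

```python
from collections import defaultdict, deque
from typing import Callable, Optional

# A handler receives a payload and may emit the name of a follow-up event.
Handler = Callable[[str], Optional[str]]


class Broker:
    """Broker topology: routes events to subscribers and knows nothing about the workflow."""

    def __init__(self) -> None:
        self.subscribers: dict[str, list[Handler]] = defaultdict(list)
        self.log: list[str] = []

    def subscribe(self, event: str, handler: Handler) -> None:
        self.subscribers[event].append(handler)

    def publish(self, event: str, payload: str) -> None:
        queue = deque([event])
        while queue:
            current = queue.popleft()
            self.log.append(current)
            for handler in self.subscribers[current]:
                emitted = handler(payload)
                if emitted is not None:
                    queue.append(emitted)


class Mediator:
    """Mediator topology: a central coordinator that owns the ordered list of steps."""

    def __init__(self, steps: list[tuple[str, Callable[[str], None]]]) -> None:
        self.steps = steps
        self.log: list[str] = []

    def handle(self, payload: str) -> None:
        for name, component in self.steps:
            component(payload)
            self.log.append(name)


# Choreography: each component reacts to an event and emits the next one.
broker = Broker()
broker.subscribe("code.written", lambda payload: "review.done")
broker.subscribe("review.done", lambda payload: "docs.updated")
broker.subscribe("docs.updated", lambda payload: None)
broker.publish("code.written", "feature-123")
print(broker.log)  # ['code.written', 'review.done', 'docs.updated']

# Orchestration: the mediator calls each component in a predefined order.
mediator = Mediator([
    ("code", lambda payload: None),
    ("review", lambda payload: None),
    ("docs", lambda payload: None),
])
mediator.handle("feature-123")
print(mediator.log)  # ['code', 'review', 'docs']
```

In the broker version the workflow exists only as the sum of the subscriptions. In the mediator version it is written down in one place, which is easier to reason about but couples every step to the coordinator.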

How the Platform Approaches Harnesses

The platform runs agentic workloads in Kubernetes. A workload container is the platform's compute unit. It takes three git repos as input and chains them at startup through a three-layer bootstrap. The platform does not provide workflow. The platform only provides Agentic Composition. Workflows could build on the platform using a workflow engine like LangChain or Dify.

| Layer | Repo | Maps to |
|---|---|---|
| 1 | Bootstrap repo (public, encrypted) | Credentials: SSH keys, secret passphrases |
| 2 | Dotfiles repo (private) | Outer Harness: shell config, tool settings, MCP servers, agent skills, encrypted secrets |
| 3 | Workspace repo (private) | Workspace: Adapter and Product |

The agent tooling (Claude Code, OpenCode) and the provider (Anthropic, OpenAI) form the Inner Harness. The bootstrap and dotfiles repos together form the Outer Harness. Layer 1 delivers credentials. Layer 2 installs the dotfiles repo, which carries the persona's shell config, tool settings, agent rules, and secrets. Layer 3 clones the workspace repo into the container's working directory.

The platform quickstart calls this combination a persona. A platform persona carries infrastructure provisioning tools and cloud config. A data persona carries pipeline orchestration and data-quality rules. A security persona carries vulnerability scanners and threat modeling skills. Swapping the dotfiles repo URL in the container config swaps the Harness. Swapping the workspace repo URL retargets the agent at a different codebase. The Model runs inside the container.

Workload containers are ephemeral and idempotent. Any container can be destroyed and rebuilt from the same three inputs.

The dotfiles repo and the workspace repo evolve independently. The dotfiles repo gains a new MCP server or a refined skill on its own schedule. The workspace repo gains new code, tests, or Adapter files on its own schedule. Neither repo knows about the other. The contract between them is shallow: the dotfiles repo expects a workspace at a known path, and the workspace expects certain tools and abilities to be present. That shallow contract is what makes them composable. A workload container brings them together at startup and gives them cohesion for the life of the session.
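The three inputs and the shallow contract can be sketched as follows. This is not the platform's actual configuration format: `WorkloadConfig`, its field names, the `check_contract` helper, and the example.com URLs are all hypothetical. The `which` parameter exists only so the check can be exercised without the real tools installed.

```python
import shutil
import tempfile
from dataclasses import dataclass, replace
from pathlib import Path
from typing import Callable, Optional


@dataclass(frozen=True)
class WorkloadConfig:
    """The three inputs to a workload container (hypothetical field names)."""
    bootstrap_repo: str  # layer 1: credentials
    dotfiles_repo: str   # layer 2: Outer Harness
    workspace_repo: str  # layer 3: Workspace (Adapter and Product)


def check_contract(
    workspace_path: Path,
    required_tools: list[str],
    which: Callable[[str], Optional[str]] = shutil.which,
) -> list[str]:
    """Return contract violations. An empty list means the two sides compose."""
    problems = []
    # Harness side of the contract: a workspace exists at the known path.
    if not workspace_path.is_dir():
        problems.append(f"workspace missing at {workspace_path}")
    # Workspace side of the contract: the tools it expects are present.
    for tool in required_tools:
        if which(tool) is None:
            problems.append(f"tool not on PATH: {tool}")
    return problems


coding = WorkloadConfig(
    bootstrap_repo="https://example.com/bootstrap.git",
    dotfiles_repo="https://example.com/dotfiles-coding.git",
    workspace_repo="https://example.com/platform.git",
)
# Swapping the dotfiles repo URL swaps the Harness. The Workspace is unchanged.
security = replace(coding, dotfiles_repo="https://example.com/dotfiles-security.git")

with tempfile.TemporaryDirectory() as tmp:
    installed = {"go": "/usr/bin/go"}  # pretend only go is installed
    print(check_contract(Path(tmp), ["go", "kubectl"], which=installed.get))
    # ['tool not on PATH: kubectl']
```

Keeping the check this small is the point: the fewer things each side assumes about the other, the more freely the two repos can evolve.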

What is the difference between Prompt, Context, and Harness engineering?

- Prompt engineering - constructing the correct syntax and phrases in a prompt to align the model to the desired outputs in a single turn.
- Context engineering - curating the smallest possible set of high-signal tokens such that sequences of turns maintain fidelity toward the goal.
- Harness Engineering - composing the controllers, sensors, and frameworks (rules, tools, skills) needed to steer Models to a desired set point, sometimes across many turns, sessions, and Agentic Compositions.

Looking Forward

The industry is turning Harnesses and Workspaces into cloud primitives. Dify and LangChain both offer hosted options. The frontier providers are working on the same. This follows the same trajectory that turned servers and databases into IaaS. The patterns described in this paper will increasingly be off-the-shelf components and managed services rather than hand-assembled repos. There will be a proliferation of competitive offerings.

The conversation is evolving rapidly. Most of the writing on Harness Engineering is less than a year old. The Böckeler, Anthropic, and OpenAI articles in the references were all published between late 2025 and early 2026. The vocabulary is still forming.

User Harnesses are deeply personal. There are countless dotfiles repos, agent management suites, and skills collections available. New marketplaces and plugins are emerging every day. There is no right way, just as there is no right way to organize a laptop or pick the "best" text editor. The modes of interaction are also a preference. Terminal agents like Claude Code, IDE copilots, desktop agents like Claude for Desktop, and web-based agents each shape the Harness differently. A practitioner's Outer Harness reflects their workflows, preferences, and the specific problems they solve.

A common language will emerge. Right now every group invents its own terms for the same concepts and reinvents old concepts. As the field matures, shared vocabulary and shared patterns will consolidate, the same way design patterns did for object-oriented programming and architectural patterns did for distributed systems.

Conclusion

The Model is the easy part. Picking Opus or Gemini or Qwen takes a few minutes. The hard part is everything around it. The Harness, the Workspace, the Adapter, the feedback sensors, the controls, the shallow contracts between independently evolving repos. That surrounding system is what determines whether the Model produces reliable work or expensive noise.

Harness Engineering is not new. Control theory is more than 150 years old. Dotfiles are decades old. Event-driven architecture, broker vs mediator, orchestration vs choreography are all well-studied problems with lots of good books. LLMs did not invent any of this. What LLMs did is make it matter more because the thing being controlled is stochastic. And a stochastic system without a governor can be risky and costly.

The Agentic Composition model gives practitioners a vocabulary for the pieces. The Harness carries the how. The Workspace carries the what. The Model sits between them. Composability comes from keeping the contract between Harness and Workspace shallow. Workflows come from chaining compositions together using the same patterns that distributed systems have used for decades.

The engineer who builds a good Harness will get more from a weaker Model than the engineer who throws a strong Model at a bare repo. That is the thesis. The rest is practice.

References

- Böckeler, B. (2026, April 2). Harness engineering for coding agent users. martinfowler.com.
  https://martinfowler.com/articles/harness-engineering.html
  Defines Agent = Model + Harness and introduces feedforward guides and feedback sensors as the two control types, each either computational or inferential. Proposes three regulation categories (maintainability, architecture fitness, behaviour) and the concept of harnessability as a property of the codebase itself.

- Young, J. (2025, November 26). Effective harnesses for long-running agents. Anthropic.
  https://www.anthropic.com/engineering/effective-harnesses-for-long-running-agents
  Addresses agent state loss across context windows with an initializer/coding agent pattern. The harness structures how agents discover work, make incremental progress, and hand off context through artifacts like feature lists and progress files.

- Rajasekaran, P. (2026, March 24). Harness design for long-running application development. Anthropic.
  https://www.anthropic.com/engineering/harness-design-long-running-apps
  Introduces a generator/evaluator architecture inspired by GANs. Separating the agent doing the work from the agent judging it is a strong lever. Every harness component encodes an assumption about what the model cannot do alone; those assumptions go stale as models improve.

- Lopopolo, R. (2026, February 11). Harness engineering: leveraging Codex in an agent-first world. OpenAI.
  https://openai.com/index/harness-engineering/
  Documents building a product with zero manually-written code. Treats the repository as the primary interface for agent legibility: structured docs as system of record, custom linters enforcing architecture, and recurring "garbage collection" agents that scan for drift. Conclusion: "Our most difficult challenges now center on designing environments, feedback loops, and control systems."

- Huntley, G. (2025, July 14). Ralph Wiggum as a "software engineer".
  https://ghuntley.com/ralph/
  Describes the "Ralph loop": a bash while-loop that feeds a prompt into a coding agent continuously. One task per loop, minimal context window usage, eventual consistency over correctness per iteration. Back pressure through tests, type checkers, and static analyzers rejects invalid generations. Iterative tuning of the prompt replaces direct coding.

- GitHub does dotfiles. dotfiles.github.io.
  https://dotfiles.github.io/
  Community guide to managing configuration files through GitHub repositories. Covers backup, restore, and sync of developer toolbox settings. The Outer Harness is a dotfiles repo with LLM-specific files added.

- Athalye, A. Dotbot. GitHub.
  https://github.com/anishathalye/dotbot
  Lightweight dotfiles bootstrapper that symlinks, creates directories, and runs shell commands from a YAML manifest. A single git clone && ./install stands up an Outer Harness on a new machine.

- Jones, A. (2026). Building an AI native engineering org. AI Summit Spring 2026, IT Revolution. (freewalled)
  https://videos.itrevolution.com/watch/1183220322
  Walks through converting a traditional engineering org into an AI-native one. Key takeaway for this paper is the agent-friendly repository as the unit of readiness.

- Wolfram, S. (2023, February 14). What is ChatGPT doing... and why does it work? Stephen Wolfram Writings.
  https://writings.stephenwolfram.com/2023/02/what-is-chatgpt-doing-and-why-does-it-work/
  Explains transformer mechanics from token prediction through attention heads and neural net layers. Grounding for why system prompts shift output distributions through fixed weights rather than changing them.

- Osmar, N. (2026, February 12). How large language models work: an easy explanation. AI-Consciousness.Org.
  https://ai-consciousness.org/how-large-language-models-work-from-base-models-to-conversations/
  Describes how context shapes activation patterns through frozen weights. Covers base models, conversation threading, and Anthropic's circuit tracing research.

- Ford, N., Richards, M., Sadalage, P., & Dehghani, Z. (2021). Software architecture: The hard parts. O'Reilly Media. ISBN 978-1-4920-8689-5
  Covers workflow coordination patterns for distributed systems: broker vs mediator topologies, orchestration vs choreography, and saga patterns for managing distributed transactions. Source material for the orchestration and coordination concepts applied in this paper.

- Hohpe, G. & Woolf, B. (2003). Enterprise integration patterns. Addison-Wesley. ISBN 978-0-321-20068-3
  Catalogs messaging patterns for integrating enterprise systems: message channels, routers, translators, and endpoints. Source material for the event-driven architecture concerns and patterns applied in this paper.

- Richards, M. & Ford, N. (2020). Fundamentals of software architecture. O'Reilly Media. ISBN 978-1-4920-4345-4
  Defines broker and mediator topologies for event-driven architecture. The broker topology uses a dumb pipe with no central coordination. The mediator topology uses a central coordinator that knows the workflow steps. Source for the topology definitions in this paper.

- Mendonca, N. et al. (2026). Making sense of AI agents hype: adoption, architectures, and takeaways from practitioners. arXiv 2604.00189.
  https://arxiv.org/html/2604.00189v1
  Survey of 234 practitioner talks on AI agent architectures. Confirms that agentic systems face the same distributed systems challenges as traditional microservices: coordination, reliability, ordering, and tool integration.

- LangChain. langchain.com.
  https://www.langchain.com/
  Open-source framework for building AI agents with workflow orchestration, tool integration, and memory. Example of a workflow tool that sits above the Agentic Composition layer and chains compositions together. The platform does not provide this layer.

- Dify. dify.ai.
  https://dify.ai/
  Open-source agentic workflow builder with no-code orchestration, RAG pipelines, and multi-model integration. Example of a workflow tool that sits above the Agentic Composition layer and chains compositions together. The platform does not provide this layer.

- Non-functional requirement. Wikipedia.
  https://en.wikipedia.org/wiki/Non-functional_requirement
  Defines non-functional requirements as criteria for judging system operation rather than specific behaviors. Source for the quality, security, and performance attributes that Harness sensors measure.

- ISO/IEC 25010. ISO 25000.
  https://iso25000.com/index.php/en/iso-25000-standards/iso-25010
  Software product quality model with nine characteristics and sub-characteristics. Provides the formal taxonomy for the non-functional requirements that feedback sensors evaluate.

- Control theory. Wikipedia.
  https://en.wikipedia.org/wiki/Control_theory
  Defines open-loop (feedforward) and closed-loop (feedback) control, set points, error signals, controllers, and sensors. Primary source for the control theory vocabulary used throughout this paper.

- James Clerk Maxwell. Wikipedia.
  https://en.wikipedia.org/wiki/James_Clerk_Maxwell
  Published "On Governors" in 1868, the first mathematical analysis of feedback control. Described how a centrifugal governor stabilizes steam engine speed, founding the formal study of control theory.

🖋️ with 💛 by a real 🧔♂️